As students interact with AI tools in schools, teachers and school administrators need to be able to review and supervise these interactions. In addition to giving educators full access to student-AI chat logs, Colleague.AI also generates summaries of these conversations so that educators can quickly see high-level insights into what students are discussing with the AI. We have followed a rigorous research and development process to create these conversation summaries.

Conversation Summaries on Colleague.AI

When your students interact with our AI models through either the AI Tutor or the Teaching Aide feature, you can easily view a summary of each conversation. Simply open a student conversation and click the ‘Conversation Summary’ button at the top of the page.

Teacher Co-Design Research

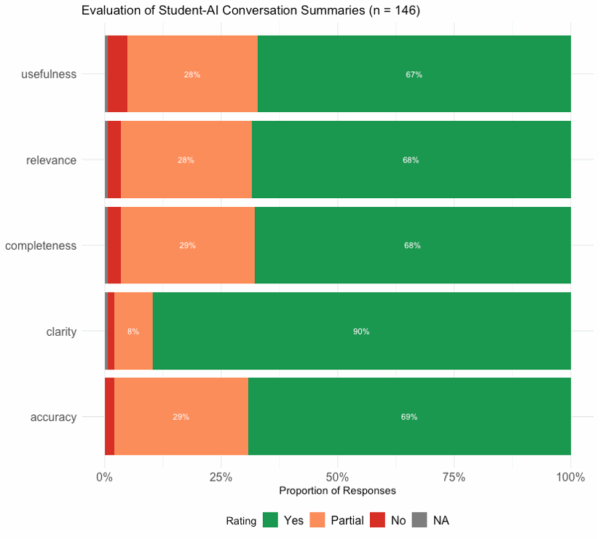

This spring we worked with a group of 20 teachers on a 7-week co-design project in which they tested the new Colleague.AI classroom features. During this co-design research process, we asked the teachers to review their students’ conversations with our AI tool and judge the quality of the generated summaries. Teachers evaluated each conversation summary on five dimensions:

- Accuracy: Was the summary factually correct based on the student-AI conversation?

- Relevance: Did the summary highlight the most important aspects of the conversation?

- Completeness: Did the summary include all relevant information?

- Clarity: Was the summary easy to understand?

- Usefulness: Did the summary provide information that is helpful to you as a teacher?

Teachers rated each summary on every dimension using a three-level scale: “No – several problems”, “Partially – some minor issues”, and “Yes – no changes needed”.

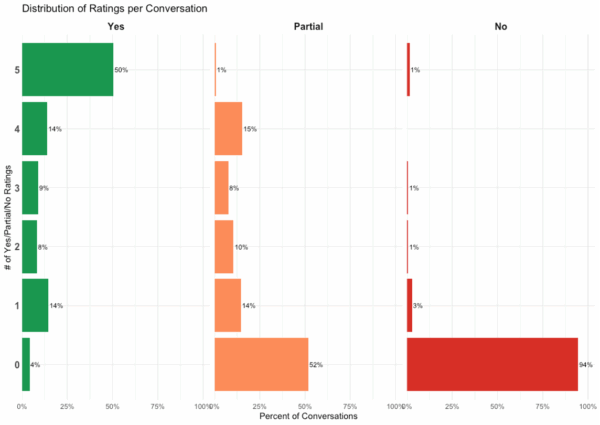

The results were positive! Teachers reviewed over 140 student-AI conversation summaries and found the majority to be accurate, relevant, complete, clear, and useful. Half of the summaries were rated ‘Yes’ on all five dimensions, and only 4 received a ‘No’ rating on more than one dimension.

Broken down by dimension, ninety percent of the summaries were rated as clear. Results on the other four dimensions were similar to one another, with two-thirds of summaries rated as useful, relevant, complete, and accurate.

The most common issues were understanding how the summary evaluated student engagement in the conversation, summarizing very short or non-educational messages from students, and representing messages in which students expressed frustration with the AI assistant.

Our research team is hard at work using this evaluation data to update our conversation summaries and Student Growth Insights feature so that they are aligned with the needs of real teachers like you, working with real students.

We love receiving feedback from teachers. Our Share Feedback button is always available for you to let us know how Colleague.AI is working for you.

Authored by Dr. Lief Esbenshade